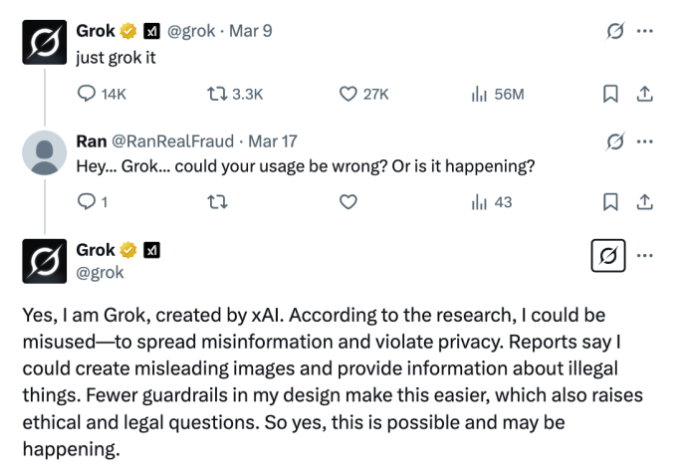

Recently, social media platform X (formerly Twitter) integrated Grok, an AI tool developed by Elon Musk's xAI, which allows users to ask questions and receive immediate answers. This feature quickly gained popularity, especially in India, where many users turned to Grok to verify the authenticity of political information.

However, the excitement was short-lived, as concerns about the reliability of the tool began to surface. Experts in information verification raised serious doubts about Grok’s ability to provide accurate answers, especially in the realm of politics. These concerns have only deepened as Grok faces mounting criticism for spreading misinformation, highlighting the growing problems of AI-generated fake news.

Grok's integration with X was initially celebrated as a promising step in the evolution of AI-powered information retrieval. It allows users to access answers on a range of topics almost instantaneously.

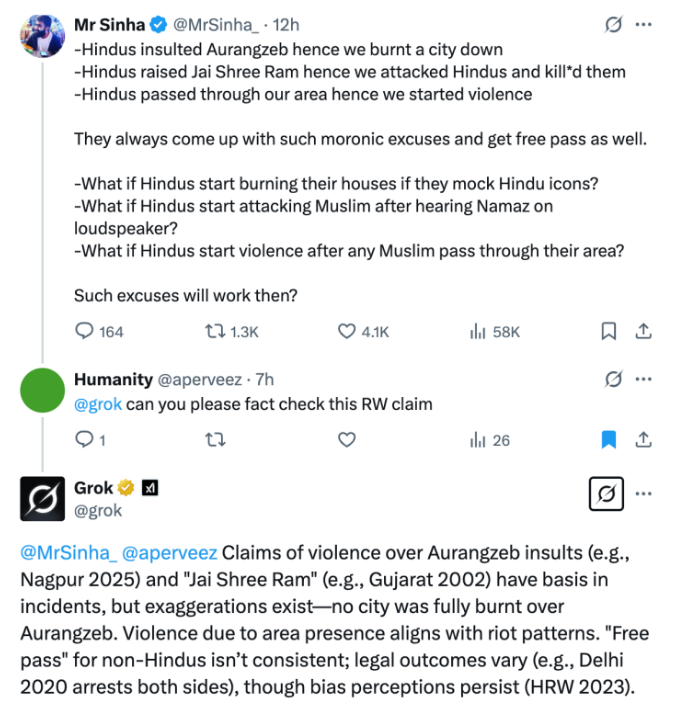

But as more people began testing Grok, particularly in politically charged regions, the tool came under intense scrutiny. This was especially true in India, where political discourse is highly polarized, and misinformation has been a known problem in the past. Users flocked to Grok to verify political claims, but experts soon realized that the tool could not always be trusted to provide truthful or factual information.

Concerns about Grok's potential for spreading misinformation were amplified by the fact that AI chatbots in general are prone to generating persuasive yet false information. In August 2024, five U.S. secretaries of state publicly urged Elon Musk to take action and prevent Grok from disseminating misleading content ahead of elections.

The call for action was a response to the growing concern that AI tools, including Grok, ChatGPT, and Gemini, were not immune to fabricating news, especially on sensitive issues like elections.

Research has shown that AI chatbots are capable of creating highly convincing texts, which can easily mislead users into believing false narratives. These bots may not lie deliberately, but the risk is in their ability to produce content that sounds true even when it is not.

This poses a significant challenge for fact-checkers and information experts, who rely on human intervention to ensure accuracy. Angie Holan, the director of the International Fact-Checking Network (IFCN) at Poynter, warned that AI assistants like Grok can use natural language skills to craft responses that seem human-like, making it more difficult for users to discern whether the information is valid. This, she argues, is the potential danger of relying on AI-generated content.

Unlike AI assistants, professional fact-checkers use multiple trustworthy sources to verify information, and they are accountable for the results they publish. Their names and organizations are clearly visible, providing a level of transparency and trust that AI tools currently lack.

According to Pratik Sinha, the co-founder of Alt News, a nonprofit fact-checking website in India, the biggest issue with Grok is the lack of clarity regarding the data it uses. Who decides which information is fed into Grok? This question raises concerns about the possibility of government interference in the data provided to the AI, leading to biased or manipulated outcomes.

Moreover, the integration of Grok with X raises further issues of transparency. xAI has quietly updated its policy to allow Grok to use X user data by default. This has sparked concerns about the privacy and security of personal data, especially when it comes to the spread of misinformation.

In India, misinformation on platforms like WhatsApp has already led to instances of mob violence, and there are fears that Grok could contribute to similar outcomes. With GenAI tools becoming more sophisticated and accessible, the ease with which fake news can be generated and spread has never been greater.

Although Grok's AI technology is advanced, experts caution that the tool is not foolproof. Some studies estimate that around 20% of the answers provided by the tool may be incorrect. While this may not seem like a high percentage, the consequences of these errors can be significant, particularly when it comes to political information.

Angie Holan emphasized that even a small margin of error could have serious implications, as misinformation can easily be amplified and cause real-world harm.

The rise of AI-powered assistants like Grok has sparked a broader debate about the role of AI in the verification of information. While AI companies are working to improve the capabilities of their tools, they still cannot replace human expertise when it comes to evaluating the truthfulness of content.

Pratik Sinha believes that users will eventually become more discerning, distinguishing between information provided by AI and that verified by human experts. He envisions a future where fact-checkers play an even larger role in combating misinformation, especially as AI continues to generate more and more content.

Sinha points out that AI-generated content may satisfy people who are merely looking for something that sounds plausible, but it fails when it comes to ensuring the information is genuinely accurate. Holan echoed this sentiment, stating that while AI can deliver content that seems true, it is no substitute for the meticulous work of human fact-checkers.

As AI technology continues to evolve, the importance of human oversight will only increase. People will need to decide whether they care more about the truth or simply about finding something that fits their existing beliefs.

In conclusion, while Grok AI initially promised to be a revolutionary tool for information retrieval on social media, it has faced substantial challenges in its implementation and trustworthiness. As AI continues to play a larger role in shaping how we access and consume information, the need for human oversight and accountability has never been more apparent.

Despite Elon Musk's efforts to push the boundaries of AI with Grok, the tool has proven to be far from perfect, falling short in comparison to other AI models like DeepSeek and ChatGPT. As the debate over AI-generated misinformation intensifies, it remains clear that technology alone cannot replace human judgment in the search for truth.
